DOSeg — Code & Showcase

Repository: github.com/GZU-SAMLab/DOSeg

Environment

We recommend Python 3.10 in a conda virtual environment with CUDA-enabled PyTorch and dependencies from requirements.txt (see Installation).
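Once the environment has been created and the dependencies installed, a quick check along the following lines can confirm the interpreter version and whether PyTorch can see a CUDA device. This is a minimal sketch: `check_environment` is a hypothetical helper that is not part of the repo, and treating 3.10 as a minimum version is an assumption based on the recommendation above.

```python
import importlib.util
import sys


def check_environment(min_python=(3, 10)):
    """Report the Python version and PyTorch/CUDA availability.

    min_python is an assumed threshold mirroring the recommended Python 3.10.
    """
    report = {
        "python": "{}.{}".format(*sys.version_info[:2]),
        "python_ok": sys.version_info[:2] >= min_python,
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "cuda_available": False,
    }
    # Import torch only if it is installed, so the check also works
    # in a fresh environment before the dependencies are in place.
    if report["torch_installed"]:
        import torch

        report["cuda_available"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```

If `cuda_available` is reported as `False` on a GPU machine, the installed PyTorch build is likely CPU-only or does not match the local CUDA driver.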

Installation

A conda virtual environment is recommended.

conda create -n doseg python=3.10 -y

conda activate doseg

# If you have cloned this repo, install the dependencies with:

pip install -r requirements.txt

# Alternatively, install directly from GitHub without cloning:

pip install git+https://github.com/GZU-SAMLab/DOSeg.git#subdirectory=third_party/CLIP

pip install git+https://github.com/GZU-SAMLab/DOSeg.git#subdirectory=third_party/ml-mobileclip

pip install git+https://github.com/GZU-SAMLab/DOSeg.git

wget https://docs-assets.developer.apple.com/ml-research/datasets/mobileclip/mobileclip_blt.pt

Dataset

Expected layout under datasets/PestCamouflage-seg/ (relative to repo root):

datasets/PestCamouflage-seg/

├── PestCamouflage_seg.yaml # YOLO data config (paths, nc, names)

├── pestcamouflage_segm.json # full COCO-style JSON (images, captions, annotations)

├── pestcamouflage_segm_train.json # train split (for embedding generation)

├── pestcamouflage_segm_val.json # val split

├── pestcamouflage_segm_train.pt # image-level text embeddings (train)

├── pestcamouflage_segm_val.pt # image-level text embeddings (val)

├── pestcamouflage_segm_train_class_embedding.pt # class-level text embeddings

├── images/

│ ├── train/ # training images

│ └── val/ # validation images

└── labels/

  ├── train/ # YOLO segmentation labels (*.txt)

  └── val/

Training reads split paths via PestCamouflage_seg.yaml.
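Before training, it can help to confirm that the dataset directory matches the layout listed above. The following is a minimal sketch of such a check; `missing_entries` is a hypothetical helper, not a script shipped with the repo, and the file names are copied from the listing.

```python
from pathlib import Path

# Entries copied from the layout listing, relative to the dataset root.
EXPECTED_FILES = [
    "PestCamouflage_seg.yaml",
    "pestcamouflage_segm.json",
    "pestcamouflage_segm_train.json",
    "pestcamouflage_segm_val.json",
    "pestcamouflage_segm_train.pt",
    "pestcamouflage_segm_val.pt",
    "pestcamouflage_segm_train_class_embedding.pt",
]
EXPECTED_DIRS = ["images/train", "images/val", "labels/train", "labels/val"]


def missing_entries(root):
    """Return the expected files and directories that are absent under root."""
    root = Path(root)
    missing = [f for f in EXPECTED_FILES if not (root / f).is_file()]
    missing += [d + "/" for d in EXPECTED_DIRS if not (root / d).is_dir()]
    return missing


if __name__ == "__main__":
    missing = missing_entries("datasets/PestCamouflage-seg")
    print("layout OK" if not missing else "missing: " + ", ".join(missing))
```

The train-split `.pt` files are produced by the text embedding preprocessing step, so they may be reported as missing until that step has been run.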

Dataset Link: PestCamouflage

Note: The dataset will be publicly released after paper acceptance.

Pretrained Weights

Checkpoint download: doseg_best.zip

Training

Main training entry: train_doseg.py.

Single GPU:

python train_doseg.py

Validation

python val_doseg.py

Text Embedding Preprocessing

We pre-compute the text embeddings for the training set, saved as pestcamouflage_segm_train.pt, using the MobileCLIP text encoder. Simultaneously, we derive the class-level prototypes, saved as pestcamouflage_segm_train_class_embedding.pt.
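To illustrate what a class-level prototype can look like, the sketch below averages the L2-normalized embeddings of each class and re-normalizes the result. This is an assumption made for illustration only: the repo's script may derive prototypes differently (for example, by encoding class names directly), and the function names and toy class labels here are hypothetical.

```python
import math
from collections import defaultdict


def l2_normalize(vec):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def class_prototypes(embeddings, labels):
    """Average the normalized embeddings of each class, then re-normalize.

    Assumed scheme for illustration; not confirmed as the repo's method.
    """
    grouped = defaultdict(list)
    for vec, label in zip(embeddings, labels):
        grouped[label].append(l2_normalize(vec))
    prototypes = {}
    for label, vecs in grouped.items():
        # Column-wise mean over all embeddings that share this label.
        mean = [sum(col) / len(vecs) for col in zip(*vecs)]
        prototypes[label] = l2_normalize(mean)
    return prototypes


if __name__ == "__main__":
    # Toy 2-D embeddings with hypothetical class names.
    toy_embeddings = [[1.0, 0.0], [0.0, 1.0], [0.0, 2.0]]
    toy_labels = ["aphid", "moth", "moth"]
    print(class_prototypes(toy_embeddings, toy_labels))
```

Normalizing before averaging keeps embeddings with large norms from dominating the prototype of their class.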

python tools/pestcamouflage_generate_text_embedding/generate_embeddings.py \

--json_file datasets/PestCamouflage-seg/pestcamouflage_segm_train.json \

--output_dir datasets/PestCamouflage-seg \

--mode both

Showcase

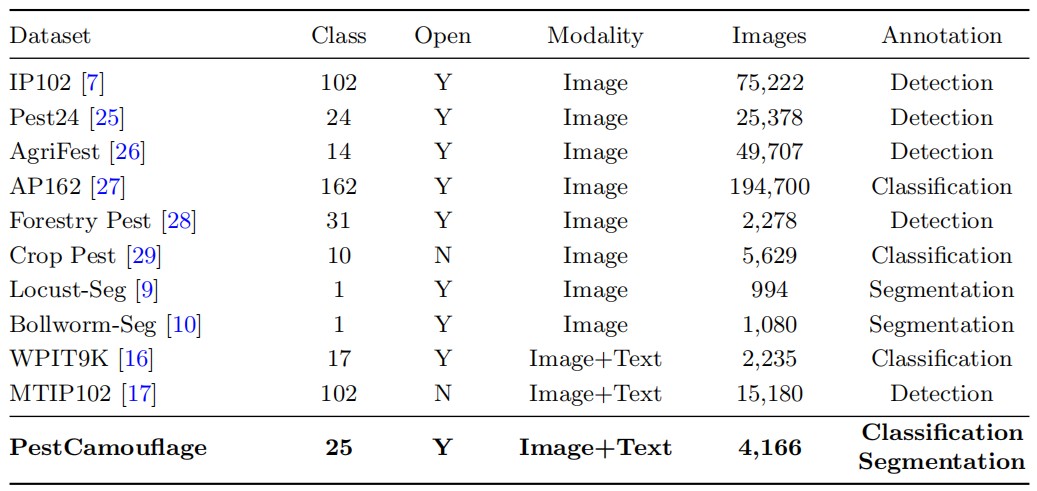

Table 1: Comparison with representative pest datasets; PestCamouflage supports camouflaged multi-class instance segmentation with paired text priors.

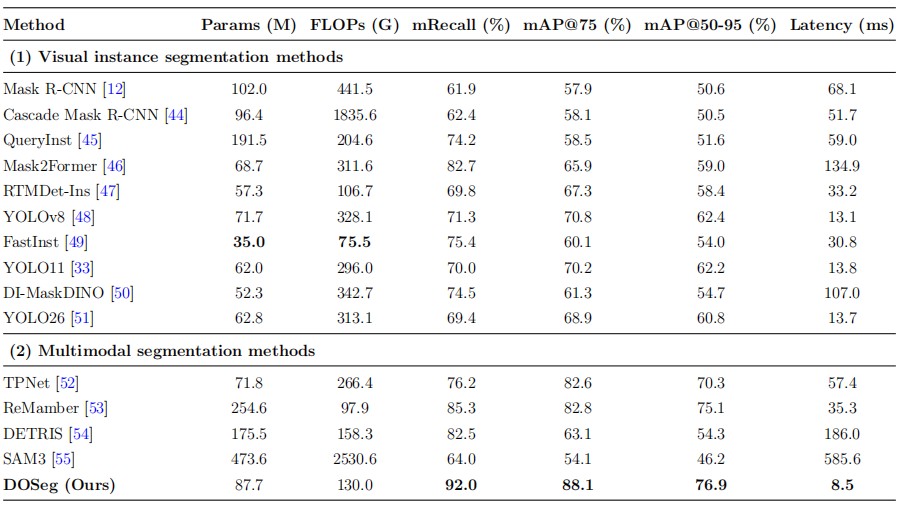

Table 3: Main results on PestCamouflage. DOSeg reaches an mAP@50–95 of 76.92% and an mAP@75 of 88.08%, with low latency (8.50 ms).

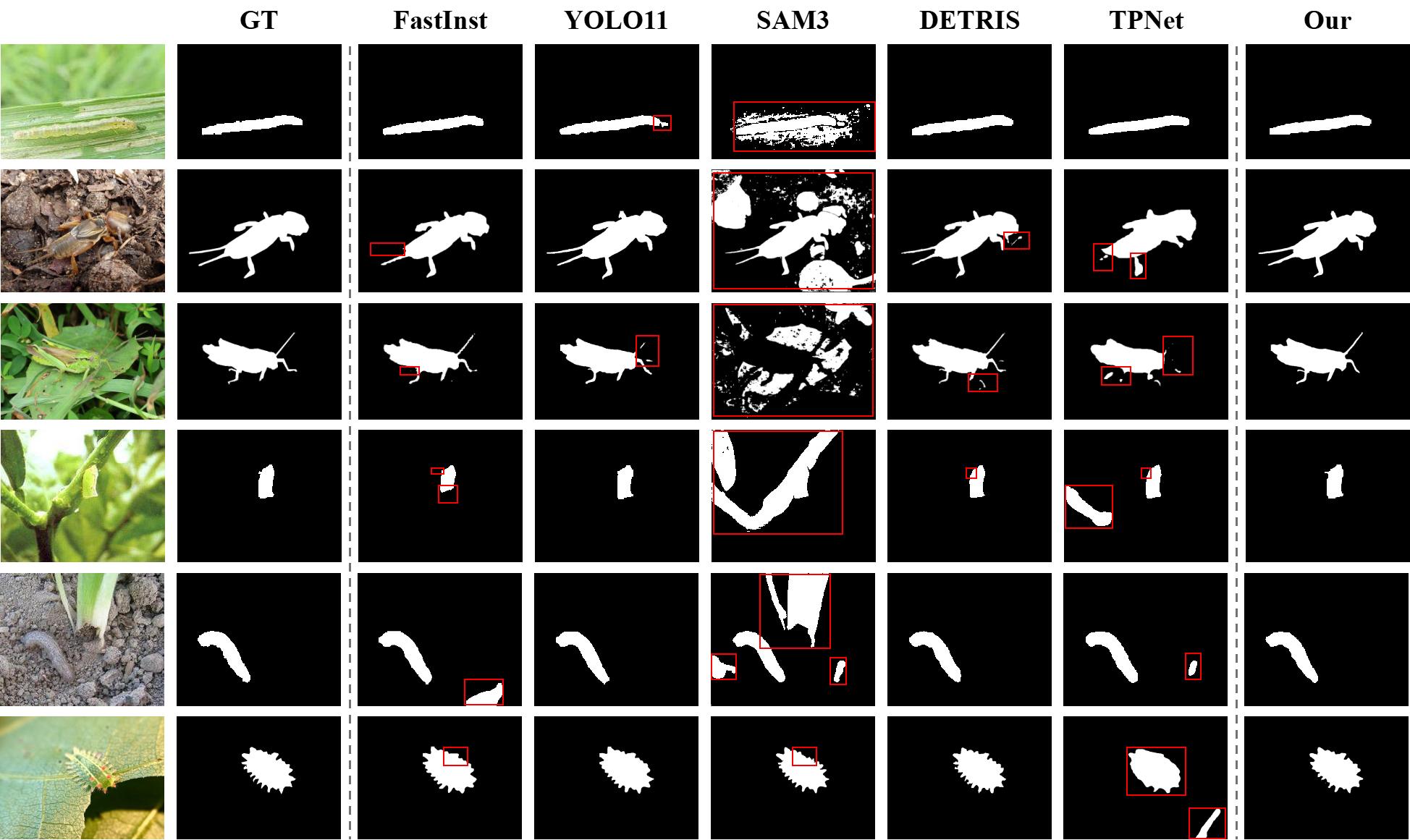

Figure 3: Qualitative comparisons in camouflaged scenes show stronger object completeness, cleaner boundaries, and fewer background false positives.