About DOSeg

Why DOSeg?

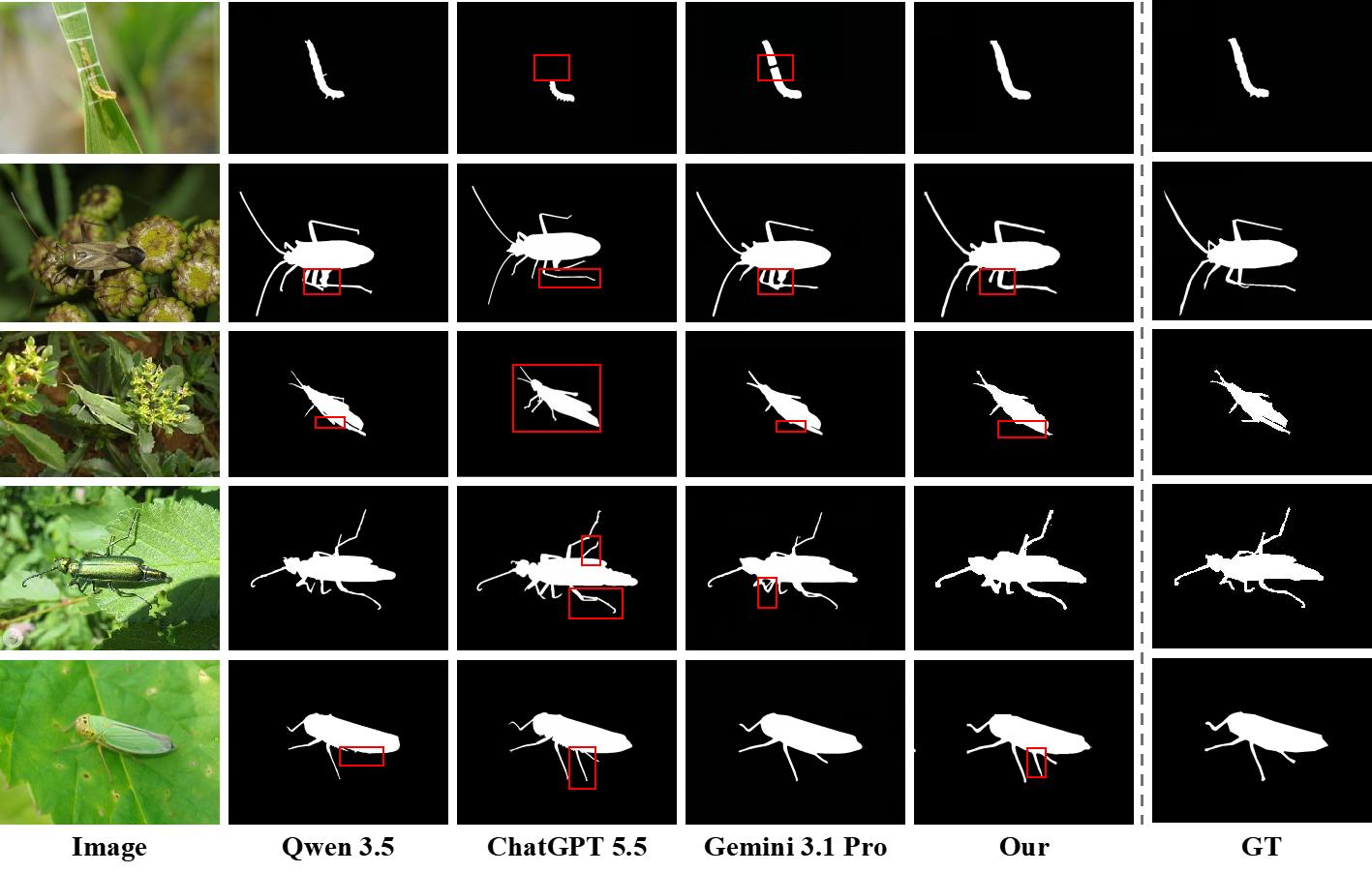

To examine the applicability boundaries of general-purpose multimodal large language models (MLLMs) in pixel-level camouflaged pest perception, this study evaluates three representative MLLMs: Qwen 3.5, ChatGPT 5.5, and Gemini 3.1 Pro. Their segmentation- or image-editing-style outputs are qualitatively compared with DOSeg and ground-truth annotations (Figure 1).

Rather than serving as a controlled quantitative benchmark against supervised instance segmentation models, this comparison focuses on whether general-purpose multimodal priors can provide stable pixel-level semantic alignment in fine-grained camouflaged agricultural scenes.

The results indicate that MLLMs possess strong open-domain visual-semantic understanding, but their outputs are often dominated by general prior knowledge. For example, the locust mask generated by ChatGPT 5.5 resembles a generic locust-like reconstruction rather than the actual object boundary.

Other MLLMs also confuse leaf veins, shadows, or branch-and-leaf textures with pest structures, leading to missing regions, false positives, and local shape mismatches. These observations suggest that general semantic priors can support coarse target awareness, but they remain insufficient for accurate pixel-level pest delineation under severe camouflage.

Figure 1: Qualitative comparison of MLLM outputs with DOSeg and ground truth in camouflaged pest scenes (illustrative; not a controlled quantitative benchmark against supervised instance segmentation).

By contrast, DOSeg introduces domain-knowledge constraints and supervised mask learning for camouflaged pest instance segmentation. Through dual-granularity textual semantics, orthogonal subspace purification, and semantic-guided mask generation, it strengthens the correspondence between pest-specific image evidence and category semantics. As a result, its masks follow ground-truth pest boundaries more closely, and false activations from visually similar backgrounds are suppressed. Compared with general-purpose segmentation- or image-editing-style outputs, DOSeg offers a more task-specific and controllable solution with efficient inference, which suits resource-constrained agricultural perception scenarios such as UAV scouting, farm machinery terminals, and mobile field monitoring.
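This page does not spell out how orthogonal subspace purification is computed, so the following is only a generic illustration of the underlying linear-algebra idea, not DOSeg's actual module. It assumes a feature vector and a handful of hypothetical "nuisance" directions (for example, directions associated with background texture), and removes the part of the feature that lies in the span of those directions. All function names are illustrative.

```python
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def orthonormalize(vectors: List[Vector], eps: float = 1e-12) -> List[Vector]:
    """Gram-Schmidt: build an orthonormal basis for the span of `vectors`."""
    basis: List[Vector] = []
    for v in vectors:
        w = list(v)
        for b in basis:
            coeff = dot(w, b)
            w = [wi - coeff * bi for wi, bi in zip(w, b)]
        norm = dot(w, w) ** 0.5
        if norm > eps:  # skip directions already covered by the basis
            basis.append([wi / norm for wi in w])
    return basis


def remove_subspace(feature: Vector, nuisance: List[Vector]) -> Vector:
    """Project `feature` onto the orthogonal complement of span(nuisance).

    Assumption: `nuisance` holds hypothetical background directions; the
    real DOSeg module may define and learn its subspaces differently.
    """
    out = list(feature)
    for b in orthonormalize(nuisance):
        coeff = dot(out, b)
        out = [oi - coeff * bi for oi, bi in zip(out, b)]
    return out
```

After the projection, the returned vector has zero inner product with every nuisance direction, which is the sense in which the feature is "purified" in this sketch.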

Results and Practical Value

On PestCamouflage, DOSeg reaches mAP@50–95 = 76.92%, mAP@75 = 88.08%, and mRecall = 92.00% while maintaining practical edge-side latency.
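For readers unfamiliar with the notation, mAP@50–95 conventionally averages average precision over mask IoU thresholds from 0.50 to 0.95 in steps of 0.05, while mAP@75 uses the single threshold 0.75. The sketch below shows only the two ingredients of that convention, mask IoU and the threshold sweep. It assumes the COCO-style protocol, which this page does not confirm, and it is not a full AP computation (no confidence ranking or precision-recall integration). The function names are illustrative.

```python
from typing import List

Mask = List[List[int]]  # binary mask: 1 = pest pixel, 0 = background


def mask_iou(pred: Mask, gt: Mask) -> float:
    """Intersection over union of two same-sized binary masks."""
    inter = 0
    union = 0
    for row_p, row_g in zip(pred, gt):
        for p, g in zip(row_p, row_g):
            if p and g:
                inter += 1
            if p or g:
                union += 1
    return inter / union if union else 0.0


def iou_thresholds() -> List[float]:
    """IoU thresholds 0.50, 0.55, ..., 0.95 (assumed COCO-style sweep)."""
    return [round(0.50 + 0.05 * i, 2) for i in range(10)]


def matched_fraction(iou: float) -> float:
    """Fraction of thresholds at which one prediction counts as a match."""
    thresholds = iou_thresholds()
    return sum(iou >= t for t in thresholds) / len(thresholds)
```

A predicted mask that covers four of six ground-truth pixels with no spill-over has an IoU of about 0.67, so it counts as a match at the 0.50 to 0.65 thresholds but not at 0.70 and above. This is why boundary quality matters more for mAP@50–95 than for looser single-threshold scores.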

This balance of accuracy and latency makes class-aware masks more reliable under camouflage and supports more actionable precision crop-protection decisions in real agricultural workflows.

Future Perspectives

Toward intelligent crop protection, DOSeg can serve as a domain-knowledge-guided perception module for early pest monitoring and precision intervention in complex field environments. For camouflaged pests, instance-level masks provide finer spatial evidence than coarse localization, supporting pest occurrence mapping, population distribution estimation, and early hotspot identification.

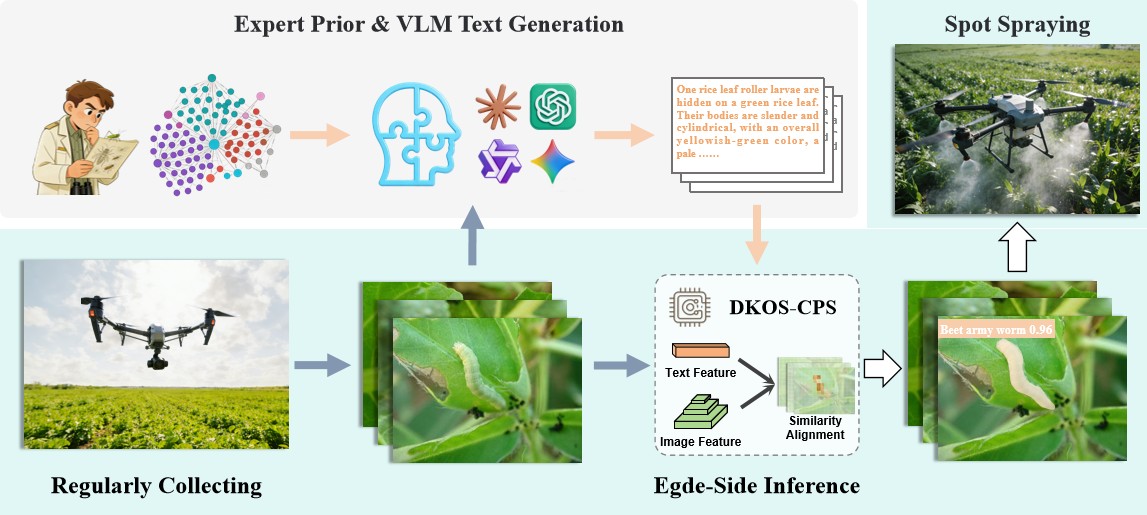

As shown in Figure 2, DOSeg can be integrated into a prospective crop-protection pipeline with UAV scouting, knowledge graphs, and advanced vision-language large models (VLLMs). In this pipeline, UAVs or mobile platforms periodically collect crop imagery, while cloud-side VLLMs generate structured textual descriptions under expert-prior and knowledge-graph constraints.

DOSeg then performs edge-side semantic-guided inference and class-aware mask prediction, converting camouflaged pest observations into pixel-level distribution maps and risk-aware decision cues. These outputs can support early warning, prioritized field scouting, prescription map generation, and site-specific intervention, with precision spraying serving as only one possible downstream operation. Future work can further extend this framework through pest category expansion, cross-domain adaptation, few-shot updating, and multi-temporal environment-aware pest analysis.
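One simple way to turn per-instance masks into a distribution map is to aggregate them over a coarse grid and flag cells whose pest coverage exceeds a threshold. The sketch below illustrates that idea under stated assumptions: the grid cell size, the coverage threshold, and the function names are all hypothetical, and the pipeline in Figure 2 may compute its maps and risk cues differently.

```python
from typing import List, Tuple

Mask = List[List[int]]  # binary instance mask: 1 = pest pixel


def coverage_grid(masks: List[Mask], height: int, width: int,
                  cell: int) -> List[List[float]]:
    """Fraction of pixels in each grid cell covered by any instance mask."""
    rows = (height + cell - 1) // cell
    cols = (width + cell - 1) // cell
    covered = [[0] * cols for _ in range(rows)]
    total = [[0] * cols for _ in range(rows)]
    for y in range(height):
        for x in range(width):
            r, c = y // cell, x // cell
            total[r][c] += 1
            if any(m[y][x] for m in masks):
                covered[r][c] += 1
    return [[covered[r][c] / total[r][c] for c in range(cols)]
            for r in range(rows)]


def hotspots(grid: List[List[float]],
             threshold: float) -> List[Tuple[int, int]]:
    """Grid cells (row, col) whose coverage reaches the threshold."""
    return [(r, c)
            for r, row in enumerate(grid)
            for c, value in enumerate(row)
            if value >= threshold]
```

Cells returned by `hotspots` could then be mapped to field coordinates to prioritize scouting or to build a prescription map. How thresholds are chosen would depend on the pest and crop and is left open here.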

Figure 2: Conceptual overview of the DOSeg-centered intelligent crop protection pipeline for early pest monitoring and site-specific intervention.

Research Team

Smart Agriculture and Multimedia Lab (SAMLab), Guizhou University.

Contact: administer@samlab.cn

Project site: http://dkos.samlab.cn/

Supplementary note. Performance may vary on rare species or under changing field conditions. Qwen3-VL was used only to draft candidate text; all final annotations were manually reviewed.